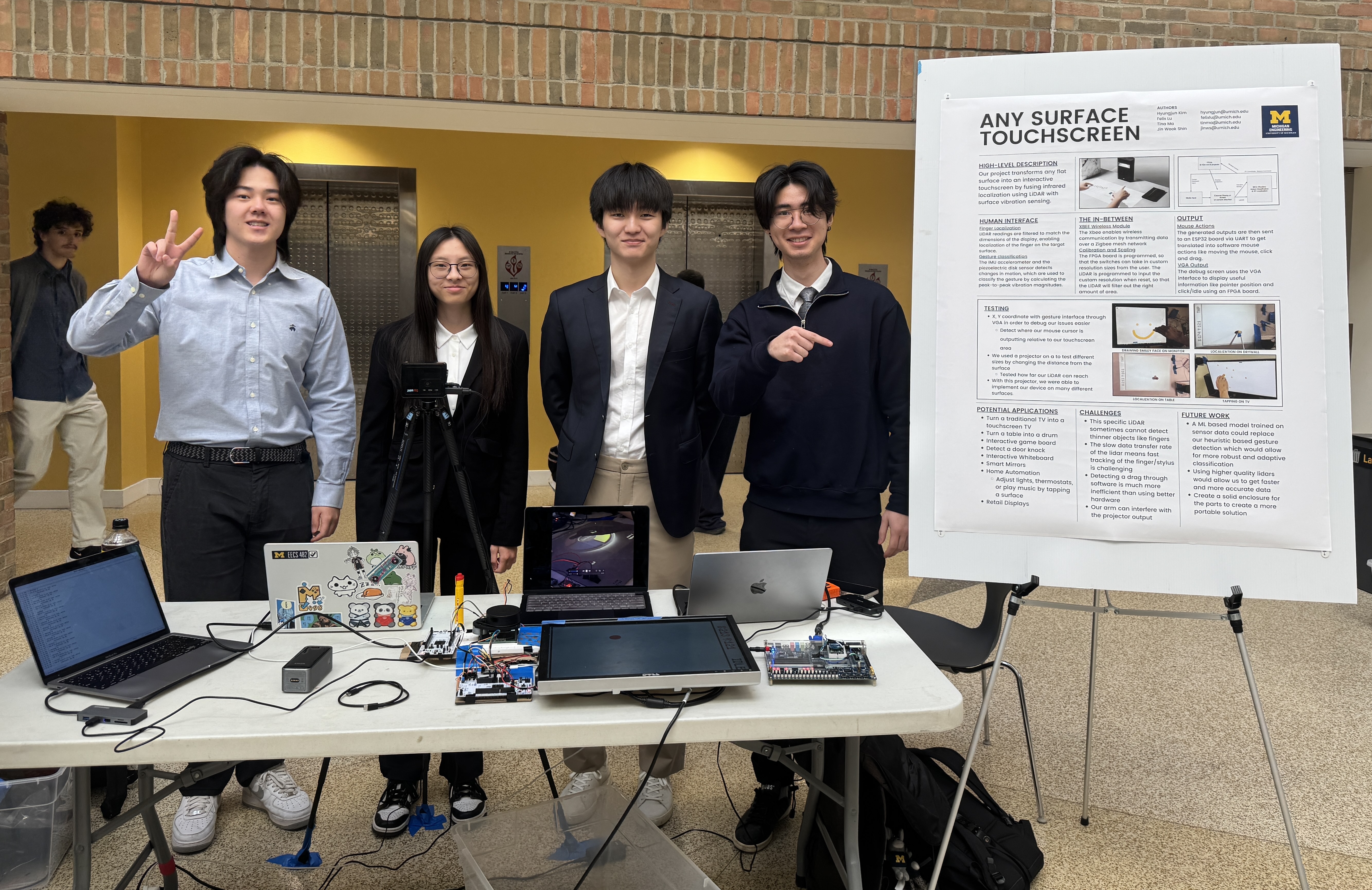

April 24, 2025

Any Surface Touchscreen

Any Surface Touchscreen is a system that transforms arbitrary flat surfaces into interactive input interfaces by combining spatial localization and vibration-based sensing. By estimating both the position and type of user interaction, the system enables touch-like input on everyday surfaces without requiring dedicated touch hardware.
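To make "position and type" concrete, here is a minimal sketch of how the two sensing paths could be fused into a single input event. It is not the project's actual code. It assumes the LiDAR path yields an optional surface position, and that the vibration path yields a short window of IMU z-axis acceleration. The `tap`/`hover` categories, the `TAP_THRESHOLD_G` value, and all function names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple

# Hypothetical threshold (in g); a real value would be tuned on the actual surface.
TAP_THRESHOLD_G = 0.15


@dataclass
class TouchEvent:
    kind: str                      # hypothetical categories: "tap" or "hover"
    position: Tuple[float, float]  # surface coordinates in metres


def vibration_energy(accel_z: Sequence[float]) -> float:
    """Peak absolute deviation from the window mean (a crude high-pass filter)."""
    if not accel_z:
        return 0.0
    mean = sum(accel_z) / len(accel_z)
    return max(abs(a - mean) for a in accel_z)


def classify_interaction(position: Optional[Tuple[float, float]],
                         accel_z: Sequence[float]) -> Optional[TouchEvent]:
    """Combine a localized position (LiDAR) with a vibration window (IMU)."""
    if position is None:
        return None  # nothing localized on the surface: no interaction
    if vibration_energy(accel_z) >= TAP_THRESHOLD_G:
        return TouchEvent("tap", position)    # contact produced a vibration spike
    return TouchEvent("hover", position)      # object present, but no impact felt


# Example: a spike in the window turns a localized object into a tap.
event = classify_interaction((0.2, 0.1), [1.0, 1.01, 1.3, 0.98])
```

Localization decides *where* the event happens, and the vibration check decides *what kind* of event it is. This mirrors the split between the two sensing modalities described above.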

I led the ideation of the project, drawing inspiration from surface acoustic wave (SAW) sensing research and formulating the system concept. I implemented the STM32-based LiDAR interface and sensor integration pipeline, and contributed to debugging the FPGA-based display system.

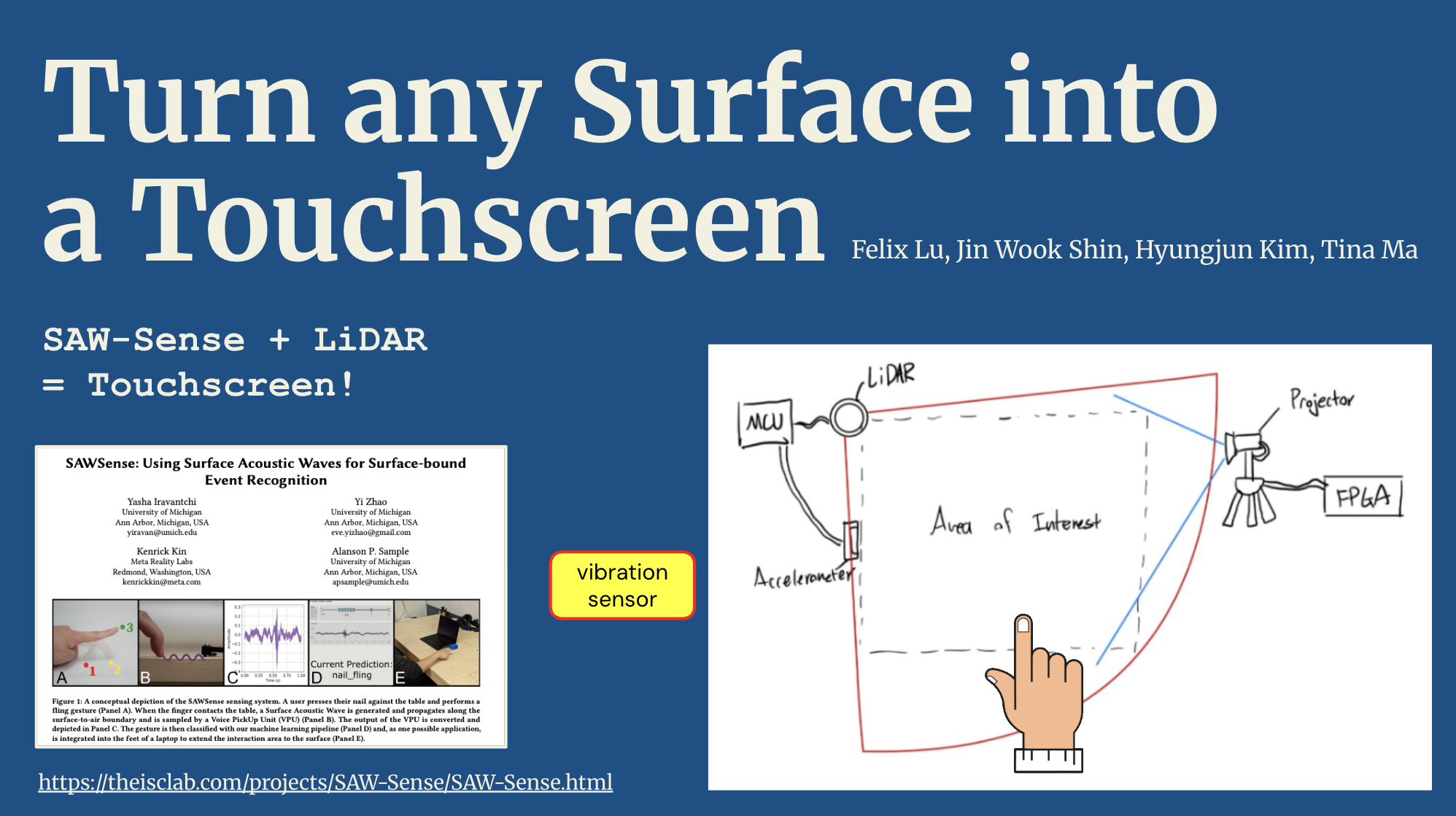

Ideation

This project grew out of my interest in interactive sensing systems, which predates my joining my current research lab (ISC Lab). I was particularly inspired by work such as SAWSense, which uses surface acoustic wave signals for activity recognition. Building on this idea, I explored whether combining interaction localization with surface sensing could go beyond recognition to a full input system, which ultimately led to the concept of turning any surface into a touchscreen. The final system required a stylus-like object because of sensing limitations: the LiDAR we could afford was not precise enough, and a better one would have been too expensive. Even so, the system successfully validated the core idea of spatially aware surface interaction.

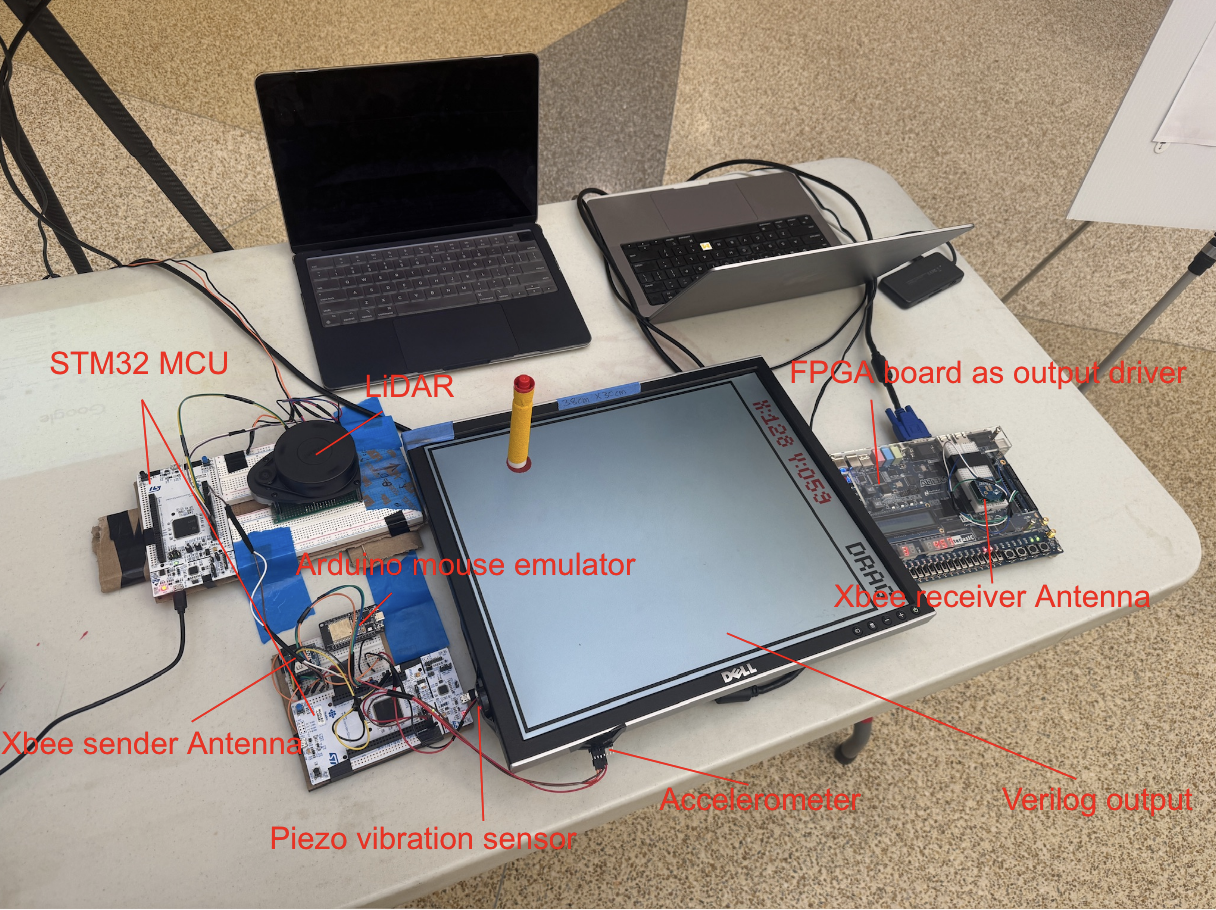

Hardware Overview

The system integrates multiple hardware components to enable sensing, processing, and output. A LiDAR sensor and an IMU handle interaction localization and motion detection. Both are interfaced through an STM32 Nucleo board, which is responsible for low-level sensor control and data processing. An FPGA drives an external display over VGA to provide real-time visual feedback mapped to the interaction surface. Additional components, an ESP32 and a Bluetooth link, provide connectivity to external devices, so the system can act as a general-purpose input interface for controlling computers and applications.
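Mapping a LiDAR reading onto the VGA display is essentially a coordinate transform. The sketch below illustrates one way to do it. It is illustrative only and is not the project's implementation. It assumes a 2D scanning LiDAR mounted at the top-left corner of a rectangular active area, reporting each hit as an angle (measured from the top edge toward the surface interior) and a distance. The surface dimensions are hypothetical, and the standard 640x480 VGA mode is also an assumption.

```python
import math
from typing import Optional, Tuple

# Hypothetical geometry: sensor at the top-left corner of the active area,
# x along the top edge, y pointing into the surface (matching screen rows).
SURFACE_W_M = 0.60
SURFACE_H_M = 0.45
VGA_W, VGA_H = 640, 480  # assumed 640x480 VGA output mode


def lidar_to_surface(angle_deg: float, distance_m: float) -> Tuple[float, float]:
    """Convert a polar LiDAR hit (angle, distance) to surface x/y in metres."""
    theta = math.radians(angle_deg)
    return distance_m * math.cos(theta), distance_m * math.sin(theta)


def surface_to_pixel(x_m: float, y_m: float) -> Optional[Tuple[int, int]]:
    """Scale surface coordinates to display pixels; None if outside the area."""
    if not (0.0 <= x_m < SURFACE_W_M and 0.0 <= y_m < SURFACE_H_M):
        return None  # e.g. a reflection from beyond the active surface
    px = int(x_m / SURFACE_W_M * VGA_W)
    py = int(y_m / SURFACE_H_M * VGA_H)
    return px, py


def lidar_to_pixel(angle_deg: float, distance_m: float) -> Optional[Tuple[int, int]]:
    """Full pipeline: polar LiDAR hit -> surface point -> VGA pixel."""
    return surface_to_pixel(*lidar_to_surface(angle_deg, distance_m))


# A hit 0.3 m straight along the top edge lands mid-way across the top row.
print(lidar_to_pixel(0.0, 0.3))  # (320, 0)
```

Keeping the geometry in a few constants means recalibrating for a different surface only touches those values, not the sensing or display code.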

Project Video